The Engineer's Guide to Self-Supervised Learning


Learn what self-supervised learning is and how engineers can use it to train AI models with minimal labeled data. This guide explores key techniques, real-world applications, and the benefits of self-supervised learning in computer vision and machine learning.

Here is a quick look at the concept of self-supervised learning.

- What is self-supervised learning (SSL)?

A machine learning approach where a model creates its own labels from unlabeled data and learns by predicting parts of the input data from other parts. This bridges supervised and unsupervised learning: it requires no human-labeled data, yet still trains with a predictive (supervised-like) objective.

- How is self-supervised learning different from supervised and unsupervised learning?

Supervised learning uses labeled data (human-annotated ground truth) for training, while unsupervised learning finds patterns in unlabeled data without explicit labels. SSL lies in between: it doesn’t need external labels (like unsupervised), but formulates internal proxy tasks with pseudo-labels (like supervised) to train neural networks.

- Why do we need self-supervised learning?

SSL unlocks the ability to utilize vast amounts of raw data (images, text, audio) without costly labeling. It yields rich representation learning, improving model performance on downstream tasks (via transfer learning) and reducing the dependence on huge labeled datasets. In domains where labeled data is scarce or expensive (e.g. medical imaging, autonomous driving), SSL dramatically cuts down training data needs while boosting efficiency.

- What are some techniques and examples of SSL?

Popular self-supervised learning techniques include contrastive learning (e.g. SimCLR, MoCo for vision), masked prediction (e.g. masking words in sentences as in BERT, or image patches in Vision Transformers), and generative pre-training (e.g. GPT predicting next tokens). These methods let models learn visual and language features automatically. For instance, large language models (LLMs) like BERT or GPT are trained with self-supervision on text, and vision models use tasks like predicting a withheld part of an image.

- Where is self-supervised learning used?

SSL is widely used in computer vision (pre-training models for image classification, object detection, segmentation) and natural language processing (training powerful language models for translation, chatbots, etc.) without manual labels. It’s also applied in speech processing (e.g. models like wav2vec 2.0 learn from unlabeled audio). These self-supervised models achieve state-of-the-art results by leveraging unlabeled data and then fine-tuning on smaller labeled datasets for specific tasks.

Self-Supervised Learning (SSL) helps AI models to learn from unlabeled data by predicting missing or transformed parts of the input using the remaining data. In contrast, unsupervised learning trains models by identifying patterns in raw training data. Unlike supervised learning, SSL does not require human-labeled data, making it useful for domains with limited labeled data.

In this guide, we'll explore what self-supervised learning is and how it bridges the gap between supervised and unsupervised learning. We'll dive into the core techniques (from contrastive learning to masked modeling) that make SSL possible and look at real-world applications in vision and NLP.

Finally, we'll also discuss the advantages that make SSL appealing and show you how you can use LightlySSL for your own computer vision projects. Let’s begin.

What is Self-Supervised Learning?

Self-supervised learning (SSL) is a machine learning paradigm where models learn representations from unlabeled data by defining and solving proxy tasks (pretext tasks) that generate supervisory signals from the data itself.

In simpler terms, SSL enables models to train without manual labels by using parts of the input data to predict other parts, creating a structured learning problem from raw data.

Unlike supervised learning, which relies on human-annotated labeled data, SSL automatically generates pseudo-labels from unlabeled data, turning unsupervised learning into a structured predictive task. This is done by setting up an auxiliary task (e.g., predicting missing words in a sentence, distinguishing augmented views of the same image, or reconstructing masked image patches). The representations learned through these tasks can then be transferred to downstream applications like object detection, speech recognition, or language modeling.

Importantly, SSL typically involves a two-phase approach:

1. Pretraining (on a pretext task): Define a proxy task using the raw input data. The model (often a deep neural network) is trained to solve this task, thereby learning intermediate representations of the data.

2. Fine-tuning (on a downstream task): The learned representations are then used as a starting point for a real task (with labels) like image classification or named entity recognition, usually resulting in better performance with less labeled data.

SSL addresses some key pain points of building a machine learning model:

- Label scarcity and cost: Annotating large datasets is expensive and time consuming (think of annotating millions of images or hours of video). In SSL, the model learns directly from raw data, reducing reliance on labeled examples.

- Label bias: Supervised models can inherit biases from annotations. Because the supervision in SSL comes from the data itself, the model is not exposed to annotator bias and tends to learn more intrinsic features that generalize better.

- Unsupervised signals with supervised benefits: SSL effectively generates many supervisory signals from unlabeled data, providing feedback to train deep networks. This feedback loop is absent in vanilla unsupervised learning.

How Self-Supervised Learning Works (Techniques and Examples)

Designing a self-supervised learning algorithm means coming up with a pretext task that helps the ML model learn meaningful features from the data. The goal is to set up a task whose solution requires understanding the structure of the input.

Here are a few core techniques that have become popular in SSL for creating pretext tasks:

1. Contrastive Learning

In contrastive learning, the model learns features by telling similar and dissimilar data points apart. It pulls similar data points together in the embedding space while pushing dissimilar ones apart.

Example: SimCLR and MoCo for computer vision, SimCSE for NLP.

How it works: A prominent example of contrastive learning is SimCLR. Here, given an image, multiple augmented versions are created. The model learns to associate different augmentations of the same image while separating them from other images. The key idea is that by learning this distinction, the model learns general features that can be used for other tasks.
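The objective described above can be sketched in a few lines. This is a minimal, dependency-free illustration of an NT-Xent-style contrastive loss (the loss family used by SimCLR), not SimCLR's actual implementation. The function names, the 2-D embeddings, and the temperature value are assumptions chosen for illustration; in a real pipeline the embeddings would come from an encoder applied to augmented images.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def nt_xent_loss(view_a, view_b, temperature=0.5):
    """Average NT-Xent-style loss over a batch of paired embeddings.

    view_a[i] and view_b[i] are embeddings of two augmentations of image i.
    """
    embeddings = view_a + view_b  # 2N embeddings in one list
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        positive = (i + n) % (2 * n)  # index of the other view of the same image
        # Similarities to every other embedding (the positive plus all negatives).
        sims = [cosine(embeddings[i], embeddings[j]) / temperature
                for j in range(2 * n) if j != i]
        log_denominator = math.log(sum(math.exp(s) for s in sims))
        log_numerator = cosine(embeddings[i], embeddings[positive]) / temperature
        total += log_denominator - log_numerator
    return total / (2 * n)

# Hypothetical 2-D embeddings for two images, two views each.
aligned_a = [[1.0, 0.0], [0.0, 1.0]]
aligned_b = [[0.9, 0.1], [0.1, 0.9]]
# Same embeddings, but each view is paired with the wrong image.
mismatched_b = [[0.1, 0.9], [0.9, 0.1]]

loss_aligned = nt_xent_loss(aligned_a, aligned_b)
loss_mismatched = nt_xent_loss(aligned_a, mismatched_b)
print(loss_aligned, loss_mismatched)
```

The loss is lower when the two views of the same image sit close together in the embedding space, which is the behavior training is meant to encourage.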

2. Masked Modeling

In this technique, parts of the input data are hidden or “masked”. The model’s pretext task is to predict the missing parts.

Example: BERT (NLP) and Masked Autoencoders (MAE) for computer vision.

How it works: In MAE, portions of the input image are masked and the model learns to reconstruct the missing pixels. This helps the model understand the underlying structure of the image.
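To make the pretext task concrete, here is a small sketch of how masked inputs and their targets can be derived from raw data. It works on text tokens for readability; the same idea applies to image patches in MAE. The helper name `mask_tokens`, the `[MASK]` placeholder string, and the 25% ratio are illustrative assumptions. The sketch is also a simplification: BERT, for instance, does not always substitute the mask token at the selected positions.

```python
import random

MASK_TOKEN = "[MASK]"

def mask_tokens(tokens, mask_ratio=0.15, seed=0):
    """Hide a random subset of tokens.

    Returns (masked_input, targets), where targets maps each masked
    position to the original token the model should predict.
    """
    rng = random.Random(seed)
    num_to_mask = max(1, round(len(tokens) * mask_ratio))
    masked_positions = sorted(rng.sample(range(len(tokens)), num_to_mask))
    masked_input = list(tokens)
    targets = {}
    for pos in masked_positions:
        targets[pos] = tokens[pos]      # the label comes from the data itself
        masked_input[pos] = MASK_TOKEN  # the model only sees the placeholder
    return masked_input, targets

sentence = "the model learns to predict the missing parts".split()
masked_input, targets = mask_tokens(sentence, mask_ratio=0.25)
print(masked_input)
print(targets)
```

No human annotation is involved: the training targets are just the values that were hidden.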

3. Generative Self-Training (Autoregressive Models)

Here the models predict the next data point in a sequence based on previous ones. By modeling the probability distribution of the data, these models generate high-quality samples that follow the learned patterns.

Example: GPT (NLP), PixelCNN (Computer Vision), WaveNet (Speech)

How it works: PixelCNN models how each pixel depends on the pixels generated before it and produces images pixel by pixel. Autoregressive modeling is especially useful in applications such as text completion, image synthesis, and speech generation.
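The core of next-element prediction can be shown with a deliberately tiny stand-in. The sketch below uses a count-based bigram model rather than a neural network such as GPT or PixelCNN, so it only illustrates where the labels come from and how a next-token distribution is estimated. The corpus and function names are made up for this example.

```python
from collections import Counter, defaultdict

def next_token_pairs(tokens):
    """Each token's label is simply the token that follows it."""
    return list(zip(tokens[:-1], tokens[1:]))

def fit_bigram_model(tokens):
    """Count how often each token follows each context token."""
    counts = defaultdict(Counter)
    for context, target in next_token_pairs(tokens):
        counts[context][target] += 1
    return counts

def next_token_probs(model, context):
    """Estimated probability of each possible next token given the context."""
    total = sum(model[context].values())
    return {tok: c / total for tok, c in model[context].items()}

corpus = "the cat sat on the mat and the cat slept".split()
model = fit_bigram_model(corpus)
probs = next_token_probs(model, "the")
print(probs)  # "cat" follows "the" twice, "mat" once
```

A neural autoregressive model replaces the count table with a network conditioned on a much longer context, but the self-generated (context, next element) pairs are the same.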

4. Autoencoders

Here the model encodes the input into a lower dimensional representation (latent space) and then tries to decode it back to the original form in order to learn the features.

Example: Variational Autoencoders (VAE) (Computer Vision)

How it works: In VAE, the model first compresses the input into a latent space and then tries to reconstruct it back. This way the model learns to capture the most significant features of the data. VAE can be used for tasks like anomaly detection or generating new samples.
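For a VAE specifically, the training objective combines a reconstruction term with a regularizer on the latent space. The sketch below computes that objective for a single example, assuming a squared-error reconstruction term and a standard normal prior with a diagonal Gaussian encoder (a common setup, but an assumption here). The encoder and decoder networks themselves are omitted; `mu` and `log_var` stand for the encoder's outputs.

```python
import math

def vae_loss(x, x_reconstructed, mu, log_var):
    """Reconstruction error plus KL divergence to a standard normal prior."""
    # How far the decoded output is from the original input.
    reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_reconstructed))
    # Closed-form KL( N(mu, sigma^2) || N(0, 1) ), summed over latent dimensions.
    kl = -0.5 * sum(1 + lv - m ** 2 - math.exp(lv) for m, lv in zip(mu, log_var))
    return reconstruction + kl

# Perfect reconstruction with a latent code that matches the prior.
perfect = vae_loss([0.2, 0.8], [0.2, 0.8], mu=[0.0, 0.0], log_var=[0.0, 0.0])
# Same reconstruction, but one latent mean drifts away from the prior.
shifted = vae_loss([0.2, 0.8], [0.2, 0.8], mu=[1.0, 0.0], log_var=[0.0, 0.0])
print(perfect, shifted)
```

The KL term is what keeps the latent space well behaved enough to sample new data from it.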

5. Clustering-based Methods

In clustering-based SSL, the model groups data points into clusters, treats the cluster assignments as pseudo-labels, and is then trained on them.

Example: DeepCluster (Computer Vision)

How it works: The model first computes representations of the data points, which are then grouped with a clustering algorithm such as k-means. The model is then trained using the cluster assignments as pseudo-labels, and the two steps are repeated. This process helps the model learn better representations by allowing it to discover inherent structures within the data.
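The pseudo-labeling step can be sketched with a plain k-means loop. This is an illustrative toy, not DeepCluster's implementation: the feature vectors are hard-coded instead of coming from a network, the centroid initialization simply takes the first `k` points, and the subsequent training of the model on the pseudo-labels is left out.

```python
def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def kmeans_pseudo_labels(features, k, iterations=10):
    """Cluster feature vectors with k-means and return one cluster index per vector."""
    centroids = [list(f) for f in features[:k]]  # simple deterministic init (an assumption)
    labels = [0] * len(features)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(k), key=lambda c: squared_distance(f, centroids[c]))
                  for f in features]
        # Update step: each centroid moves to the mean of its members.
        for c in range(k):
            members = [f for f, label in zip(features, labels) if label == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels

# Hypothetical 2-D features that a network might produce for five images.
features = [[0.1, 0.2], [5.0, 5.1], [0.0, 0.3], [5.2, 4.9], [0.2, 0.1]]
pseudo_labels = kmeans_pseudo_labels(features, k=2)
print(pseudo_labels)
```

The returned indices play the role of class labels in the next training round, even though no human ever named the clusters.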

6. Predictive Modeling

In predictive modeling, the model predicts parts of the input data from other parts, effectively generating its own labels from unlabeled data.

Example: Contrastive Predictive Coding (Computer Vision, Audio), Temporal contrastive learning for video prediction (Computer Vision)

How it works: In temporal contrastive learning, the model learns to predict future frames of a video given previous frames. By predicting the temporal dynamics of the video, the model learns about the motion and relationships between objects over time.
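As a rough sketch of how such training examples can be built from an unlabeled sequence, the snippet below pairs a window of past frames with the true next frame and a few distractor frames from other time steps, in the spirit of Contrastive Predictive Coding. It only constructs the examples and trains no model. The frame identifiers, the function name, and the sampling scheme are illustrative assumptions.

```python
import random

def future_prediction_examples(frames, context_size, num_negatives, seed=0):
    """Build (context, positive, negatives) triples from an ordered sequence.

    context:   the frames immediately before time t
    positive:  the true frame at time t
    negatives: frames sampled from other time steps
    """
    rng = random.Random(seed)
    examples = []
    for t in range(context_size, len(frames)):
        context = frames[t - context_size:t]
        positive = frames[t]
        candidates = [f for i, f in enumerate(frames) if i != t]
        negatives = rng.sample(candidates, num_negatives)
        examples.append((context, positive, negatives))
    return examples

# Placeholder frame identifiers standing in for real video frames.
frames = [f"frame_{i}" for i in range(6)]
examples = future_prediction_examples(frames, context_size=2, num_negatives=2)
for context, positive, negatives in examples:
    print(context, "->", positive, "vs", negatives)
```

A model trained on these triples has to score the true future frame above the distractors, which forces it to capture how the scene evolves over time.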

Across all these techniques, the common theme is representation learning: the model is encouraged to build an internal representation of the input that captures useful factors of variation, because that’s what it needs to solve the pretext task. These representations can later be used for actual tasks of interest. In practice, engineers choose an SSL method that makes sense for their data and domain.

Applications in Computer Vision and NLP

Self-supervised learning has had a profound impact on major AI fields like computer vision and natural language processing.

Here we highlight how SSL is applied in these areas, along with some industry and research examples:

1. Computer Vision Applications

In computer vision, SSL is used to pretrain deep models on large image or video datasets, so that they can then be fine-tuned for tasks like object detection, image classification, segmentation, and more with far fewer labeled examples than traditionally needed. Some notable applications:

- Image Feature Pretraining: Self-supervised methods like contrastive learning (SimCLR, MoCo) and masked modeling (MAE) help models learn rich visual representations from unlabeled images. These features improve the model’s performance in downstream tasks like classification, object detection, and segmentation.

- Image Generation & Inpainting: Models like Masked Autoencoders (MAE) and autoregressive models (PixelCNN, Image-GPT) reconstruct missing image regions, useful for image editing and restoration.

- Video Understanding: SSL helps models learn motion patterns and temporal relationships in video. Techniques like temporal contrastive learning and masked frame prediction support applications such as video classification and activity recognition.

- Spatial Understanding and Navigation: By helping the model learn the spatial representations from images and depth data, SSL is quite useful in robotics and autonomous systems. By predicting missing parts of a scene or learning object relationships, models improve in navigation, localization, and scene understanding.

- Medical Imaging: SSL is widely used to pretrain models on large-scale medical datasets, learning features from X-rays, MRIs, and CT scans. This reduces reliance on expert-labeled data which is hard to curate at a larger scale but needed to train robust ML models for disease detection, segmentation and anomaly identification.

💡Pro Tip: When applying self-supervised learning to histology or biomedical imaging tasks, check out our Lightly-Train Histology Benchmarks to see how domain-specific pretraining improves downstream performance.

2. Natural Language Processing Applications

The common SSL techniques used in NLP are:

- Text Representation and Transformers: Models like BERT and RoBERTa use masked language modeling (MLM) to learn deep contextual embeddings. This improves tasks such as sentiment analysis, text classification, and question answering. These models capture word relationships and semantic meanings without labeled data.

- Autoregressive Language Models: GPT models predict the next token in a sequence, making them powerful for text generation, dialogue systems, and code completion. They learn coherent sentence structures and generate human-like text by training on large text corpora.

- Named Entity Recognition (NER) & Information Retrieval: SSL pre-training improves entity recognition and search capabilities, making it easier to extract and retrieve information from large text corpora.

- Multilingual and Low-Resource NLP: Models like mBERT and XLM-R learn cross-lingual representations, making them effective for machine translation, low-resource language processing, and multilingual search.

3. Other Domains and Multi-Modal SSL

Beyond CV and NLP, self-supervised learning is being applied in other areas:

- Speech Recognition: SSL techniques like Wav2Vec 2.0 allow models to learn speech representations directly from raw audio, reducing the need for transcribed data. These models improve automatic speech recognition (ASR) and spoken language understanding.

- Autonomous Vehicles & Robotics: SSL helps self-driving cars and robots learn from multimodal sensory data (images, LiDAR, text). Models trained with SSL improve object recognition, spatial understanding, and decision-making in dynamic environments.

- Multimodal Learning: Multimodal self-supervised learning enables models to understand and generate information across different data types, such as images and text. CLIP learns joint representations by training on image-text pairs, making it useful for tasks like cross-modal retrieval and zero-shot classification. Flamingo, on the other hand, combines vision and language understanding to generate accurate captions and answer visual questions (VQA) with minimal labeled data.

💡 Pro Tip: Check out Top Computer Vision Tools for ML Engineers in 2025.

Advantages and Limitations of SSL

While SSL reduces dependency on labeled data and improves generalization, it also introduces computational challenges and can be difficult to optimize. Let’s look at the advantages and limitations of using self-supervised learning to build machine learning models:

Advantages of Self-Supervised Learning

- Reduces Label Dependency

This technique effectively reduces the need for large-scale labeled datasets as the primary goal is to learn from raw, unlabeled data. This is especially useful in domains like medical imaging, where expert annotations are expensive and time-consuming, or in NLP, where manual labeling is infeasible for large corpora.

- Efficient Data Utilization

SSL extracts meaningful patterns from the large amounts of unlabeled data available as text, images, audio, and video, whereas supervised learning cannot use data without labels. This improves representation learning and performance on low-data tasks.

- Improves Generalization

The pretrained SSL models learn structured representations that generalize well to downstream tasks. For instance, models like BERT in NLP and SimCLR in vision learn feature embeddings that can be fine-tuned with minimal labeled data, improving performance across diverse applications.

- Works Across Modalities

It extends beyond NLP and vision to speech, robotics, and multimodal learning. Models like Wav2Vec (speech) and CLIP (image-text) demonstrate SSL’s ability to learn from different data types and bridge gaps between modalities for cross-domain applications.

- Enables Zero-Shot and Few-Shot Learning

Pretrained SSL models can perform zero-shot and few-shot learning by leveraging learned representations. CLIP, for example, enables zero-shot image classification by mapping text descriptions to images without fine-tuning on labeled examples.
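The zero-shot mechanism described for CLIP comes down to comparing embeddings in a shared space. The sketch below shows only that comparison step. The 3-D vectors are made up for illustration; a real system would obtain them from pretrained image and text encoders, and the function names here are hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def zero_shot_classify(image_embedding, text_embeddings):
    """Pick the text prompt whose embedding is most similar to the image embedding."""
    scores = {label: cosine(image_embedding, emb)
              for label, emb in text_embeddings.items()}
    return max(scores, key=scores.get), scores

# Made-up embeddings standing in for the outputs of pretrained encoders.
text_embeddings = {
    "a photo of a cat": [0.9, 0.1, 0.0],
    "a photo of a dog": [0.1, 0.9, 0.0],
    "a photo of a car": [0.0, 0.1, 0.9],
}
image_embedding = [0.8, 0.2, 0.1]

predicted_label, scores = zero_shot_classify(image_embedding, text_embeddings)
print(predicted_label)
```

New classes can be added by writing new text prompts, without collecting labeled images or retraining.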

Limitations of Self-Supervised Learning

- High Computational Cost

Training SSL models requires significant computational resources. Techniques like contrastive learning and masked modeling typically involve large-scale training on millions to billions of samples, demanding high-memory GPUs/TPUs. Pretraining BERT or Vision Transformers (ViTs) with SSL can take days to weeks on dedicated hardware.

- Complex Training Objectives

SSL methods rely on sophisticated loss functions and augmentations. Contrastive learning often depends on large batch sizes or carefully chosen negatives, while masked autoencoders need careful masking strategies. These complexities make SSL harder to optimize than supervised learning.

- Data Bias and Representation Issues

SSL models can inherit biases from the raw training data. If the training dataset contains imbalances or harmful biases, the model will propagate these issues into downstream tasks. This is a critical concern in applications like facial recognition, where biased representations can lead to unfair predictions.

- Lack of Interpretability

Since SSL models learn representations on their own, understanding what the model has learned is challenging. Unlike supervised models where outputs can be traced to labeled data, SSL representations are abstract and harder to interpret. This makes debugging difficult.

- Task-Specific Fine-Tuning Still Required

While SSL reduces the need for labeled data, most real-world applications still require fine-tuning on task-specific datasets. For example, BERT’s pretraining alone is insufficient for sentiment analysis. It requires additional labeled data to specialize in the task.

Latest Breakthroughs & Trends in SSL (2025 and Beyond)

Multi-Modal Self-Supervised Learning

- Cross-modal integration: New architectures effectively combine text, image, audio, and video understanding within a single model.

- Embodied intelligence: SSL models trained on multimodal data from robotics show progress in learning physical tasks.

💡 Pro tip: Learn more by reading A Brief Introduction to Vision Language Models.

Architectural Improvements

- Mixture-of-experts (MoE): Sparse activation techniques enable scaling model capacity while minimizing computation.

- Retrieval-augmented generation: Integrating external knowledge bases in SSL models reduces hallucinations in generated outputs.

- New self-supervision architectures: Yann LeCun's JEPA and Meta AI's I-JEPA (images) and V-JEPA (video) predict hidden data in latent space, capturing abstract, semantic features instead of pixel-level details.
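The sparse activation idea behind mixture-of-experts, listed above, can be illustrated with a toy top-k router. This is one common gating variant, sketched under simplifying assumptions: the experts are plain Python functions, and the gate logits are hard-coded rather than produced by a learned router.

```python
import math

def top_k_gate(gate_logits, k=2):
    """Return {expert_index: weight} for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over only the selected experts, so their weights sum to 1.
    max_logit = max(gate_logits[i] for i in top)
    exp_scores = {i: math.exp(gate_logits[i] - max_logit) for i in top}
    total = sum(exp_scores.values())
    return {i: s / total for i, s in exp_scores.items()}

def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the outputs of the selected experts only."""
    weights = top_k_gate(gate_logits, k)
    # Experts that were not selected are never called.
    return sum(w * experts[i](x) for i, w in weights.items())

# Four toy experts; a real layer would use small neural networks.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
gate_logits = [0.1, 2.0, 2.0, -1.0]  # hypothetical router scores for this input

output = moe_forward(3.0, experts, gate_logits, k=2)
print(output)
```

Total capacity grows with the number of experts, while the compute per input depends only on `k`.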

Improved Efficiency

- Parameter-efficient fine-tuning: Methods like LoRA, adapter modules, and selective updates reduce computational costs for fine-tuning large models.

- Knowledge distillation: Smaller, specialized models now capture much of the performance of larger SSL models with lower computational cost.
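To see why a method like LoRA cuts fine-tuning cost, it helps to count parameters. LoRA keeps a pretrained weight matrix W frozen and learns a low-rank update, W' = W + BA, where B has shape d_out x r and A has shape r x d_in. The layer size and rank below are illustrative assumptions, not values from a specific model.

```python
def full_finetune_params(d_out, d_in):
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in

def lora_params(d_out, d_in, rank):
    """Trainable parameters for the low-rank factors B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)

d_out, d_in, rank = 768, 768, 8  # hypothetical layer size and rank

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
ratio = lora / full

print(f"full fine-tuning: {full} trainable parameters")
print(f"LoRA (rank {rank}): {lora} trainable parameters ({ratio:.1%} of full)")
```

For this layer the low-rank update trains about 2% of the parameters that full fine-tuning would, and the saving grows as the layer gets larger relative to the rank.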

SSL in New Domains

- Scientific SSL: SSL models tailored for molecular biology, chemistry, and materials science show expert-level performance.

- Medical imaging: SSL pre-training on medical datasets enables few-shot adaptation for rare conditions and diagnostic tasks.

Democratization of SSL

- Better access to training methods and pre-trained models for resource-constrained environments.

- Open-source alternatives to proprietary SSL models are gaining traction.

Continuous Learning

- Models are now being built that update their representations (features) incrementally as new data arrives.

- Such models aim to identify and address knowledge gaps autonomously.

💡 Pro tip: Check out A Guide for Active Learning in Computer Vision.

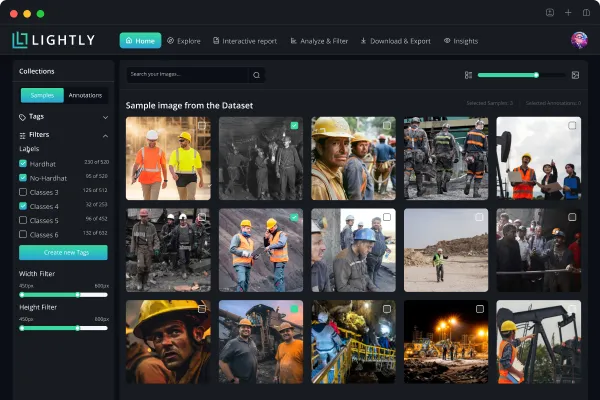

LightlySSL in Action

LightlySSL provides a simple framework for self-supervised learning, focusing on images. It is a comprehensive tool for implementing SSL and building efficient AI models with unlabeled data. It offers tools for dataset curation, pre-trained models for embedding generation, and support for custom backbone models for SSL pre-training.

Key Features

- Modular framework: Exposes low-level building blocks, including loss functions and model heads, for flexibility and customization.

- Model Training: Supports SSL methods like SimCLR and MoCo for tasks such as image classification and segmentation.

- Lightweight Design: Designed to be easy to use, with a syntax and structure similar to PyTorch.

- Embedding Generation: Generates embeddings from datasets for use in downstream tasks or fine-tuning.

- Custom backbone support: Allows integration of custom backbone models for self-supervised pre-training.

- Distributed training: Supports distributed training using PyTorch Lightning, enabling efficient scaling across multiple GPUs and nodes.

Conclusion

In this blog, we briefly saw how self-supervised learning is changing the game by letting models learn from massive amounts of unlabeled data. With techniques like contrastive learning and multimodal training, SSL is making huge strides in fields like computer vision and NLP. While it cuts down on the need for labeled data and boosts efficiency, there are still challenges in scaling and interpretability. But with ongoing advancements, SSL is set to take on even more complex problems.

For engineers eager to dive deeper into SSL, here are a few resources to continue learning:

Research Papers

- A Cookbook of Self-Supervised Learning

- Self-supervised learning: The dark matter of intelligence

- A Simple Framework for Contrastive Learning of Visual Representations

- Masked Autoencoders Are Scalable Vision Learners

- ALBERT: A Lite BERT for Self-supervised Learning of Language Representations

- Emerging Properties in Self-Supervised Vision Transformers

- Supervised Contrastive Learning

- wav2vec 2.0: A Framework for Self-Supervised Learning of Speech Representations

- Barlow Twins: Self-Supervised Learning via Redundancy Reduction

Blogs and Tutorials

- The self-supervised learning cookbook

- Self-Supervised Learning | Yann LeCun's Tutorial at NeurIPS

- Self-Supervised Learning Guide: Super simple way to understand AI
