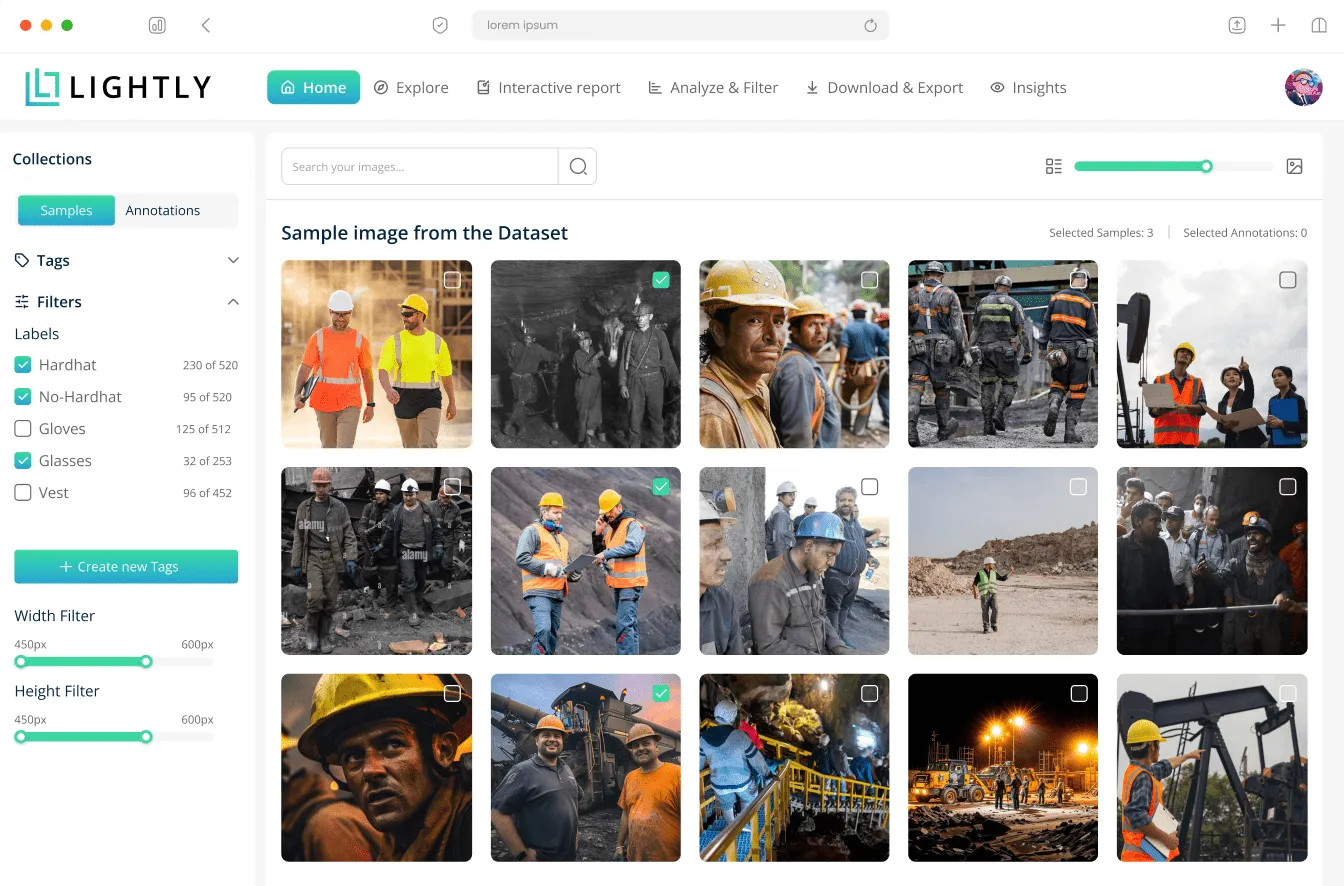

Computer Vision Suite For

Lightly helps ML teams build better vision systems — from data curation to model pretraining, fine-tuning, and AI edge deployment. Build production-ready AI faster.

Trusted by enterprises, researchers and startups.

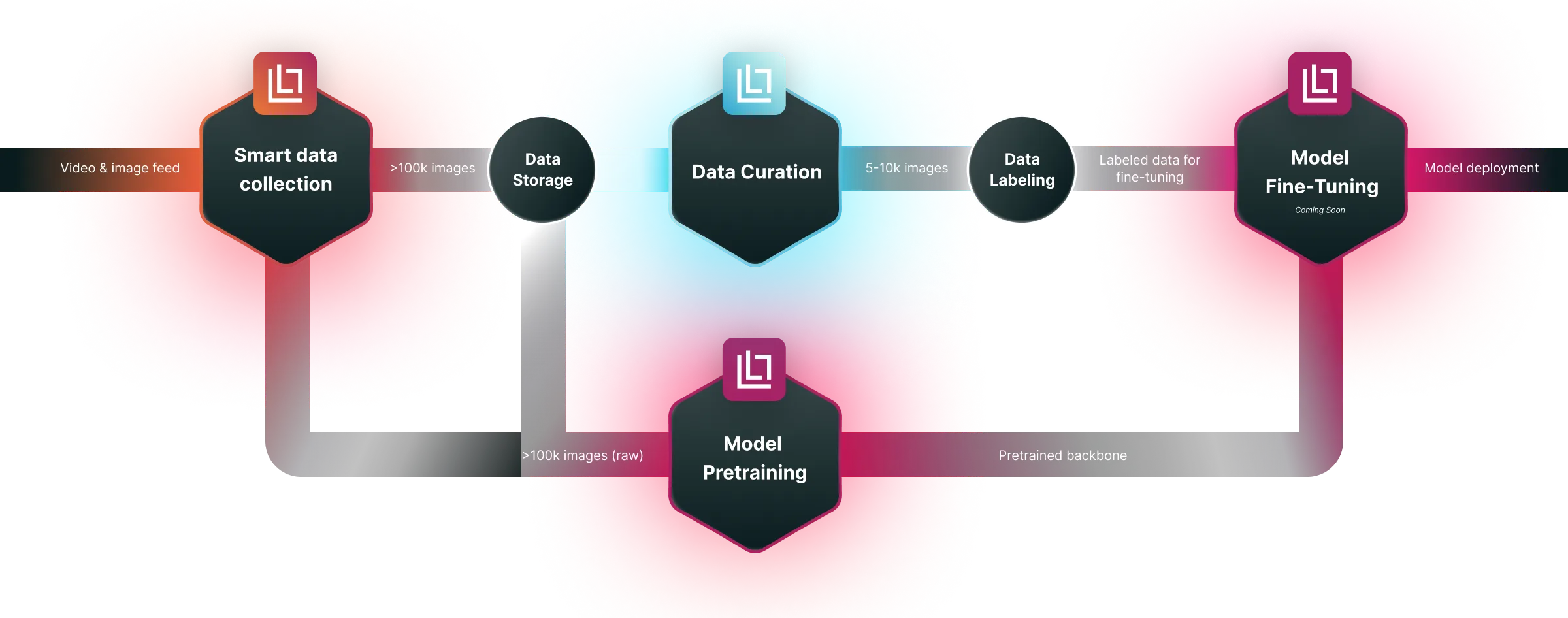

Fast & Easy Integration Into Your ML Pipeline

Lightly's suite of tools integrates into your existing machine learning pipeline and supports data curation, model training, and deployment.

Explore Lightly Products

LightlyTrain

Self-Supervised Pretraining

Leverage self-supervised learning to pretrain models

LightlyServices

AI Training Data for LLMs & CV

Expert training data services for LLMs, AI Agents and vision

Built for solving data and model challenges across industries.

Lightly supports teams working with complex visual data—from raw collection to training-ready datasets and edge deployment.

Computer Vision Open Source Tools You’ll Actually Use

We built Lightly’s open-source tools to help engineers move faster — and smarter — with vision data.

Committed to data security

Lightly maintains high security standards, ensuring data integrity and confidentiality for enterprise-grade machine learning operations.

ISO 27001

Lightly is ISO 27001 certified, ensuring the confidentiality, integrity, and availability of your data through robust information security management.

GDPR

Lightly is fully compliant with the General Data Protection Regulation (GDPR), protecting user privacy and upholding strict data handling standards.

Ready to Transform Your Computer Vision Projects?

Join the growing community of ML engineers using Lightly.ai to build efficient, accurate computer vision systems, from data curation to deployment.
