Pretraining vs. Fine-tuning: What Are the Differences?


What’s the difference between pretraining and fine-tuning in machine learning? This article breaks down the key concepts, use cases, and trade-offs of each approach—helping you understand when to use pretrained models and how fine-tuning tailors them for specific tasks.

Here is the key information on pretraining and fine-tuning.

- What is the meaning of pre-training? Pre-training is the initial stage in language model development where a neural network is exposed to a broad dataset to develop a generalized understanding of linguistic structures, patterns, and semantics. The primary goal is to create a model capable of generating language for many purposes, making it adaptable for future specialized tasks.

- Why is it called pre-training? It is called pre-training because this stage occurs before any task-specific training begins. It encodes foundational knowledge and general patterns in the model's parameters, which fine-tuning then builds on.

- What does pre-trained mean? A pre-trained model is a neural network that has already been trained on large, diverse datasets to learn general patterns — grammar, syntax, visual structure — without optimization for any single task. Such a model can be adapted to many downstream tasks through transfer learning.

- What is the purpose of fine-tuning? Fine-tuning is the process of taking a pre-trained language model and further training it on a specific dataset to tailor its behavior for a particular task or domain. Fine-tuning adapts pre-trained models for domains such as legal analysis, medical diagnosis, or customer support — aligning model outputs with business objectives.

Understanding the difference between pre-training and fine-tuning is critical for choosing the right approach, from training LLMs to deploying AI models in real-world applications.

What is Pre-Training?

Pre-training is the process of training a neural network on large amounts of unlabeled data before any task-specific adaptation. The model develops a broad understanding of language or visual data, learning general patterns without manually labeled examples.

Starting from pre-trained weights lets a model reach strong performance on a downstream task much faster than training from scratch, because its parameters already encode useful general features instead of random values. A pre-trained model carries foundational knowledge that can then be applied to many tasks through transfer learning.

Pre-training typically relies on self-supervised learning, in which models learn from unlabeled data by predicting missing or hidden parts of that data. This is how large language models build broad capabilities without requiring labeled examples at the pre-training stage.
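To make "predicting missing parts of the data" concrete, here is a minimal Python sketch of how two common self-supervised objectives turn raw, unlabeled text into training targets without any human labels. Whitespace tokenization, the `[MASK]` string, and the function names are illustrative assumptions, and the masking step always substitutes `[MASK]`, a simplification of BERT's recipe (which sometimes keeps or randomizes a selected token instead).

```python
import random

MASK = "[MASK]"  # illustrative mask token

def next_token_pairs(tokens):
    """Next-token prediction: every prefix is an input, the token after it is the target."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Masked modeling: hide a random subset of tokens; the hidden originals become targets."""
    rng = random.Random(seed)
    inputs, targets = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(MASK)
            targets.append(tok)   # the model must recover this token
        else:
            inputs.append(tok)
            targets.append(None)  # no loss is computed at unmasked positions
    return inputs, targets

tokens = "the cat sat on the mat".split()
print(next_token_pairs(tokens)[:2])
print(mask_tokens(tokens, mask_prob=0.3))
```

In both cases the targets come from the text itself, which is why pre-training can scale to corpora far too large to label by hand.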

A model pre-trained on diverse data is not focused on one task. Instead, it develops versatile representations that transfer to many specific tasks and applications.

Pre-Training Activities and Techniques

Key pre-training objectives in language and vision models include:

- Masked Language Modeling (MLM): Random tokens are masked, and the model is trained to predict them. This teaches the model contextual word relationships and is central to models like BERT.

- Next Token Prediction: The model learns to predict each token from all preceding tokens, which lets it generate text one token at a time. This pre-training approach underlies GPT-style large language models.

- Masked Image Modeling (MIM): The model reconstructs masked image regions, learning visual representations from raw images without labels.

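- Contrastive Learning: The model learns an embedding space in which similar inputs (such as two augmented views of the same image, or an image and its caption from a large paired image-text corpus) lie close together and dissimilar inputs lie far apart.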
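The contrastive objective can be sketched as an InfoNCE-style loss: each anchor embedding should score higher against its own positive than against every other item in the batch. This minimal pure-Python version is one-directional (CLIP-style training averages both directions), and the toy 2-D vectors and temperature of 0.1 are assumptions chosen for illustration.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

def info_nce(anchors, positives, temperature=0.1):
    """Mean InfoNCE loss. anchors[i] and positives[i] are a matching pair;
    every positives[j] with j != i serves as a negative for anchors[i]."""
    a = [normalize(v) for v in anchors]
    p = [normalize(v) for v in positives]
    total = 0.0
    for i, ai in enumerate(a):
        # Cosine similarities divided by the temperature act as logits.
        logits = [dot(ai, pj) / temperature for pj in p]
        m = max(logits)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]  # -log softmax probability of the true pair
    return total / len(a)

# Toy 2-D embeddings: each anchor is closest to its own positive.
anchors = [[1.0, 0.0], [0.0, 1.0]]
positives = [[1.0, 0.1], [0.1, 1.0]]
print(info_nce(anchors, positives))                  # pairs line up: lower loss
print(info_nce(anchors, list(reversed(positives))))  # pairs swapped: higher loss
```

Minimizing this loss pulls matching pairs together and pushes the in-batch negatives apart, with no labels beyond knowing which items belong together.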


Pre-Training in Practice

Modern LLMs are pre-trained on trillions of tokens. Qwen3 (2025) was trained on approximately 36 trillion tokens spanning 119 languages. A single pre-trained LLM can serve as the base for many fine-tuned variants across industries, which makes the pre-train-then-adapt paradigm highly economical.

Pre-training at this scale takes weeks on thousands of GPUs, so most teams start from an existing pre-trained model rather than pre-training from scratch.
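A back-of-the-envelope estimate shows why pre-training runs take weeks on thousands of GPUs. The sketch below uses the common approximation that training costs about 6 * N * D FLOPs for N parameters and D tokens. The 8B-parameter model size, the 1e15 FLOP/s peak per GPU, and the 40% utilization are hypothetical values, not figures for any specific model or cluster.

```python
SECONDS_PER_DAY = 86_400

def training_flops(n_params, n_tokens):
    """Rule-of-thumb training compute: about 6 FLOPs per parameter per token."""
    return 6 * n_params * n_tokens

def wall_clock_days(total_flops, n_gpus, peak_flops_per_gpu, utilization):
    """Days needed when the cluster sustains `utilization` of its peak throughput."""
    sustained = n_gpus * peak_flops_per_gpu * utilization
    return total_flops / sustained / SECONDS_PER_DAY

# Hypothetical run: 8B parameters, 36T tokens, 2,048 GPUs,
# an assumed 1e15 FLOP/s peak per GPU and 40% utilization.
flops = training_flops(8e9, 36e12)
days = wall_clock_days(flops, n_gpus=2048, peak_flops_per_gpu=1e15, utilization=0.4)
print(f"{flops:.2e} FLOPs, roughly {days:.0f} days")
```

Under these assumptions the run needs about 1.7e24 FLOPs, or a little over three weeks on 2,048 GPUs; halving the utilization or the GPU count doubles the wall-clock time.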


What is Fine-Tuning?

Fine-tuning adapts a pre-trained model to specific tasks using smaller datasets. It refines the model's existing knowledge rather than replacing it, which requires far less data and compute than training from scratch.

Fine-tuning is highly efficient and cost-effective, and it is especially valuable when high-quality domain data is available but limited in scope. According to a survey by Stanford University, 95% of natural language processing (NLP) models used transfer learning, the technique underpinning fine-tuning, reducing training time and improving model accuracy across NLP tasks.

Fine-tuning on a specific dataset enables a pre-trained model to follow instructions, produce better outputs, and handle specialized use cases such as answering questions in legal analysis, medical coding, or customer support. This is how a general-purpose model is aligned with the specific business objectives of a task-specific application.

Fine-tuning can also help the model adapt to new data that emerged after pre-training, making it valuable for keeping AI models current without retraining from scratch.

The Fine-Tuning Process

The fine-tuning process involves training a pre-trained model on task-specific data using supervised learning. The model's parameters are updated to minimize loss on the target task while preserving the general knowledge acquired during pre-training.
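As a toy illustration of "updating parameters to minimize loss on the target task", the sketch below uses a logistic-regression model as a stand-in for a neural network: fine-tuning simply continues gradient descent from the pre-trained weights on a small labeled dataset instead of starting from a random initialization. The weights, data, learning rate, and epoch count are all hypothetical.

```python
import math

def predict(w, b, x):
    """Sigmoid output of a linear model."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def log_loss(w, b, data):
    """Mean binary cross-entropy over (features, label) pairs."""
    eps = 1e-12
    return -sum(y * math.log(predict(w, b, x) + eps)
                + (1 - y) * math.log(1 - predict(w, b, x) + eps)
                for x, y in data) / len(data)

def fine_tune(w, b, data, lr=0.1, epochs=50):
    """Continue full-batch gradient descent from the given (pre-trained) weights."""
    w = list(w)  # copy so the original pre-trained weights stay untouched
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for x, y in data:
            err = predict(w, b, x) - y  # gradient of the loss w.r.t. the logit
            for j, xj in enumerate(x):
                grad_w[j] += err * xj
            grad_b += err
        w = [wi - lr * g / len(data) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(data)
    return w, b

pretrained_w, pretrained_b = [0.5, -0.2, 0.0], 0.0  # hypothetical pre-trained parameters
task_data = [([1.0, 0.0, 1.0], 1), ([0.0, 1.0, 1.0], 0),
             ([1.0, 1.0, 0.0], 1), ([0.0, 0.0, 1.0], 0)]
before = log_loss(pretrained_w, pretrained_b, task_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
after = log_loss(tuned_w, tuned_b, task_data)
print(f"task loss before: {before:.3f}, after: {after:.3f}")
```

In a real network the same idea applies, usually with a small learning rate and few epochs so the updated weights stay close to the pre-trained ones.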

High-quality data matters more than volume: fine-tuning on a few hundred curated examples often outperforms fine-tuning on thousands of noisy ones. Supervised Fine-Tuning (SFT) uses such labeled data to refine the pre-trained model, which develops task-specific representations suited to the target application.
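One simple way to act on "quality over volume" is to filter a fine-tuning set before training. This minimal sketch drops exact duplicates (after lowercasing and collapsing whitespace), examples with missing labels, and very short texts. The `text`/`label` field names and the 10-character threshold are hypothetical choices, not a prescribed recipe.

```python
def normalize_text(s):
    """Lowercase and collapse whitespace so trivial variants count as duplicates."""
    return " ".join(s.lower().split())

def curate(examples, min_chars=10):
    """Keep labeled, non-trivial examples and drop exact duplicates (first copy wins)."""
    seen = set()
    kept = []
    for ex in examples:
        text, label = ex.get("text", ""), ex.get("label")
        key = normalize_text(text)
        if label in (None, "") or len(key) < min_chars or key in seen:
            continue
        seen.add(key)
        kept.append(ex)
    return kept

raw = [
    {"text": "Refund within 30 days?", "label": "policy"},
    {"text": "refund  within 30 days?", "label": "policy"},  # near-verbatim duplicate
    {"text": "Hi", "label": "greeting"},                      # too short to be useful
    {"text": "Where is my order?", "label": ""},              # missing label
    {"text": "Cancel my subscription please", "label": "cancel"},
]
print(curate(raw))
```

Real pipelines usually go further (near-duplicate detection, label-consistency checks, embedding-based diversity sampling), but even exact deduplication removes examples that would otherwise be over-weighted during training.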


SFT vs. PEFT: What's the Difference?

Supervised Fine-Tuning (SFT) updates all or most of the model's parameters on labeled task-specific data. SFT is the standard fine-tuning approach and delivers strong results, but requires significant compute for large models.

Parameter-Efficient Fine-Tuning (PEFT) updates only a small subset of parameters, typically 0.1%–1%, leaving most pre-trained weights frozen. PEFT techniques such as LoRA and QLoRA achieve near-full performance on many tasks at a fraction of the cost, which makes task-specific fine-tuning practical on consumer hardware.
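The LoRA idea fits in a few lines: a frozen weight W (d x k) is augmented with a low-rank product B A, where B is d x r and A is r x k, scaled by alpha / r. Only A and B are trained, and B starts at zero so the adapted layer initially behaves exactly like the pre-trained one. The pure-Python matrix helper, the toy 2 x 2 weights, and the 4096 x 4096 layer at rank 8 are illustrative assumptions.

```python
def matmul(A, B):
    """Plain nested-list matrix product: (n x m) @ (m x p) -> n x p."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def lora_weight(W, A, B, alpha, r):
    """Effective weight W + (alpha / r) * (B @ A). W is frozen; only A and B are trained."""
    delta = matmul(B, A)  # (d x r) @ (r x k) -> d x k, rank at most r
    scale = alpha / r
    return [[w + scale * dw for w, dw in zip(w_row, d_row)]
            for w_row, d_row in zip(W, delta)]

def lora_trainable_fraction(d, k, r):
    """Share of a d x k layer's weights that LoRA trains: r * (d + k) out of d * k."""
    return r * (d + k) / (d * k)

W = [[1.0, 0.0], [0.0, 1.0]]  # frozen pre-trained weight (toy 2 x 2)
A = [[0.3, -0.7]]             # r x k, normally randomly initialized
B = [[0.0], [0.0]]            # d x r, zero-initialized
print(lora_weight(W, A, B, alpha=16, r=1) == W)  # unchanged before any training step
print(f"{lora_trainable_fraction(4096, 4096, 8):.2%}")
```

For a 4096 x 4096 layer at rank 8, LoRA trains 65,536 of 16,777,216 weights, about 0.39%, which sits inside the 0.1%–1% range typical of PEFT.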

Both SFT and PEFT are widely used in production, and they can be combined: LoRA is often used to carry out SFT on labeled data. Fine-tuning offers high accuracy and efficiency but carries a risk of overfitting or inheriting biases from the base model. The right method depends on available compute and the breadth of tasks the model needs to cover.


Pre-Training vs. Fine-Tuning: Key Differences

Pre-training focuses on developing a generalized understanding of language and visual patterns, while fine-tuning tailors this understanding to specific tasks or applications.

The pre-training phase uses large, diverse datasets to build foundational language capabilities, whereas fine-tuning uses labeled data to enhance the model's performance on specific tasks. Pre-training is essential for creating a versatile machine learning model that handles various language tasks; task-specific fine-tuning then optimizes it for particular applications such as sentiment analysis or customer support.

Rather than training a separate model from scratch for each task, teams can fine-tune a pre-trained model for that task, saving significant time and compute. One limitation is that pre-training helps less when the fine-tuning data comes from a domain very different from the pre-training data. This mismatch limits how effectively the model can apply its knowledge to the new task.

What Are Pre-Training and Post-Training?

Pre-training builds the model's broad knowledge from unlabeled data. Post-training — which includes supervised fine-tuning, instruction tuning, and preference optimization — adapts that knowledge to desired behaviors on new tasks.

The four main stages of a modern LLM training pipeline are: (1) pre-training on broad unlabeled corpora, (2) supervised fine-tuning on labeled task data, (3) instruction tuning to teach the model to follow instructions, and (4) preference optimization (RLHF or DPO) to align the model with human preferences.
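For stage (4), the DPO objective has a compact closed form. Given the log-probabilities of a preferred response y_w and a rejected response y_l under the policy being trained (π) and a frozen reference model (π_ref), the per-pair loss is -log σ(β · [(log π(y_w|x) - log π_ref(y_w|x)) - (log π(y_l|x) - log π_ref(y_l|x))]). The sketch below computes it from precomputed log-probabilities; β = 0.1 and the numbers in the usage line are assumptions.

```python
import math

def softplus(x):
    """log(1 + exp(x)), computed without overflow for large |x|."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """DPO loss for one preference pair.

    Each argument is the summed log-probability of a full response given the prompt,
    under either the policy being trained or the frozen reference model."""
    chosen_logratio = policy_chosen - ref_chosen
    rejected_logratio = policy_rejected - ref_rejected
    z = beta * (chosen_logratio - rejected_logratio)
    return softplus(-z)  # equals -log(sigmoid(z))

# Hypothetical log-probabilities: the policy favors the chosen response more strongly
# than the reference does, so the loss falls below log(2).
print(dpo_loss(policy_chosen=-5.0, policy_rejected=-12.0, ref_chosen=-8.0, ref_rejected=-9.0))
```

When the policy still matches the reference exactly, the loss is log(2); it shrinks as the policy shifts probability toward preferred responses relative to the reference, without training a separate reward model.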

Pre-Training, Fine-Tuning, and RAG

Retrieval-Augmented Generation (RAG) is a third approach, distinct from both pre-training and fine-tuning. Rather than updating neural network parameters, RAG connects a pre-trained model to an external knowledge base at inference time: it retrieves relevant documents and adds them to the model's input so that its outputs can draw on that information.
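The retrieval step can be sketched with a bag-of-words cosine similarity standing in for the learned embedding models that production RAG systems typically use. The documents and prompt template are hypothetical; the point is that the model's parameters never change, only its input does.

```python
import math
from collections import Counter

def bag_of_words(text):
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    num = sum(count * b[term] for term, count in a.items())
    den = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return num / den if den else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = bag_of_words(query)
    return sorted(documents, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)[:k]

def build_prompt(query, documents, k=1):
    """Prepend retrieved context to the question; the model's weights are untouched."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"

knowledge_base = [  # hypothetical documents
    "refunds are processed within 14 days",
    "our office is closed on public holidays",
    "shipping takes 3 to 5 business days",
]
print(build_prompt("how many days until refunds are processed", knowledge_base))
```

Updating the knowledge base immediately changes what the model sees, which is why RAG suits frequently changing information better than retraining.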

Fine-tuning is the better choice when the model needs to internalize domain-specific patterns. RAG is better when the model needs access to frequently updated or proprietary information without retraining. Pre-training from scratch is justified only when no suitable pre-trained model exists for the domain.

Computer Vision Applications

Pre-training and fine-tuning are as fundamental to computer vision as to natural language processing (NLP). Vision models pre-train on large image and video datasets, developing broad visual representations, then adapt to detection, segmentation, or depth estimation tasks.

Autonomous Driving

Self-supervised pre-training enables vision models to learn geometry and scene structure from unlabeled video. DepthPro (2024) showed how a pre-trained ViT backbone achieves zero-shot metric depth estimation by training on diverse datasets of real and synthetic imagery, improving generalization across tasks.

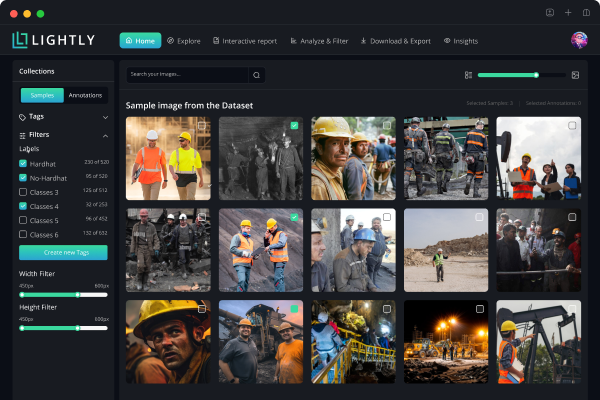

LightlyTrain supports self-supervised pre-training and model adaptation for domain-specific vision models including YOLO (v8, v11), ViTs, RT-DETR, and ResNet, with no labeled data required at the pre-training stage.

DepthPro (Bochkovski et al., 2024) also illustrates how pre-trained vision transformers enable transfer learning with flexible model design. It combines two pre-trained ViT encoders: a multi-scale patch encoder for scale invariance and an image encoder that anchors global context.

Embodied AI and Document Understanding

For embodied AI, a pre-trained vision-language model provides the reasoning foundation while task-specific training on demonstration data produces a deployable system. DeepMind SIMA 2 (2025), an agent for 3D virtual worlds, and NVIDIA GR00T N1, a foundation model for humanoid robots, both follow this pattern, building on pre-trained foundations for complex embodied tasks.

For document understanding, models like LayoutLLM (CVPR 2024) and DocLayLLM (CVPR 2025) combine a pre-trained language model with layout-aware training on labeled data. LightlyStudio provides embedding-based data curation and active learning tools for building high-quality data pipelines before model training.

"LayoutLLM: Layout Instruction Tuning with Large Language Models for Document Understanding" by Luo et al. (CVPR 2024) introduces LayoutLLM, a method that enhances document comprehension by integrating large language models (LLMs) with layout-specific instruction tuning. This approach addresses the challenge of effectively utilising document layout information, which is crucial for accurate document understanding.

To capture the structural nuances of documents, LayoutLLM employs a pretraining strategy that focuses on three levels of information:

- Document-Level Information: This level captures the overall structure and organisation of the document.

- Region-Level Information: This level focuses on specific sections or regions within the document.

- Segment-Level Information: This level examines individual segments or blocks of text within the regions.

LayoutLLM also introduced a novel module named LayoutCoT, which enables the model to focus on regions relevant to a given question. This enhances the model's ability to generate accurate answers by directing attention to relevant sections of the document. Additionally, LayoutCoT provides interpretability, allowing for manual inspection and correction of the model's reasoning process. By training on document-level, region-level and segment-level tasks, LayoutLLM develops a hierarchical understanding of document layouts.

💡Pro tip: When evaluating hardware for different training stages, our Apple M1 and M2 Performance for Training SSL Models article clarifies how SSL workloads perform on lightweight devices.

Advantages and Limitations

Accessibility: Pre-training requires massive compute, often weeks on thousands of GPUs. Fine-tuning a pre-trained model is far more accessible: PEFT methods like LoRA make it possible to run fine-tuning on a single consumer GPU in hours, putting high-performance AI within reach of teams without large infrastructure.

Data efficiency: Pre-training needs large datasets of diverse unlabeled examples. Fine-tuning achieves strong results with smaller datasets — sometimes a few hundred curated examples — compared to the large amounts needed to pre-train. This is a core advantage of the pre-train-then-adapt paradigm.

Transfer learning economics: A single pre-trained model can serve as the base for many fine-tuned variants covering different specialized tasks. Transfer learning via fine-tuning is economical for organizations with multiple use cases, avoiding the cost of pre-training a new neural network per application.

Catastrophic forgetting: Fine-tuning risks overwriting representations the model acquired during the pre-training phase. PEFT methods reduce this by leaving most pre-trained model parameters unchanged, preserving the model's broad capabilities.

Biases: Pre-trained models inherit biases from training data, which fine-tuning can amplify. Careful data curation and preference optimization are standard mitigations for production deployments.
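The accessibility advantage largely comes down to memory arithmetic. A common rule of thumb for mixed-precision training with Adam is about 16 bytes per trained parameter (fp16 weights and gradients, plus fp32 master weights and two fp32 optimizer moments). QLoRA instead stores the frozen base model in 4 bits, about 0.5 bytes per parameter, and keeps full training state only for the small adapters. The 7B model size and 40M adapter parameters below are hypothetical, and activation memory is ignored.

```python
GB = 1e9  # decimal gigabytes

def full_finetune_gb(n_params, bytes_per_param=16):
    """Memory for weights, gradients and Adam state when every parameter is trained."""
    return n_params * bytes_per_param / GB

def qlora_gb(n_params, lora_params, base_bytes=0.5, lora_bytes=16):
    """4-bit frozen base weights plus full training state for the LoRA matrices only."""
    return (n_params * base_bytes + lora_params * lora_bytes) / GB

full = full_finetune_gb(7e9)  # about 112 GB, far more than a 24 GB consumer GPU holds
qlora = qlora_gb(7e9, 40e6)   # about 4.1 GB before activations
print(f"full fine-tuning: {full:.0f} GB, QLoRA: {qlora:.1f} GB")
```

The same arithmetic explains the transfer-learning economics: each additional task needs only a new set of small adapter matrices, while the large base model is stored once.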

Is Fine-Tuning Still Relevant?

Yes. While large pre-trained models handle many tasks zero-shot, fine-tuning typically delivers better performance on specialized tasks and aligns model behavior with specific business objectives and safety requirements. In real-world applications, fine-tuning works best when it builds on a strong pre-trained foundation.

Parameter-efficient fine-tuning methods like LoRA have made model specialization more accessible than ever, and fine-tuning is now a standard approach for production AI models in industries from healthcare to autonomous driving.

Conclusion

Pre-training creates versatile pre-trained models with broad understanding of language and visual data. Fine-tuning then adapts these models to achieve optimal performance on specific tasks.

Together, the pre-training and model adaptation pipeline enables various applications across natural language processing, computer vision, and embodied AI at a fraction of the cost of training the model from scratch.

References

- DepthPro: Sharp Monocular Metric Depth in Less Than a Second. Bochkovski et al. (2024)

- LayoutLLM: Layout Instruction Tuning with Large Language Models for Document Understanding. Luo et al. (CVPR 2024)

- DocLayLLM: An Efficient Multi-modal Extension of Large Language Models. Liao et al. (CVPR 2025)

- SIMA 2: A Generalist Embodied Agent. Google DeepMind (2025)

- Fine-Tuning Large Vision-Language Models as Decision-Making Agents via Reinforcement Learning. Zhai et al. (2024)

- Large Language Models are Visual Reasoning Coordinators. Chen et al. (NeurIPS 2023)

- PaLM-E: An Embodied Multimodal Language Model. Driess et al. (2023)
