10 Best Data Curation Tools for Computer Vision [2025]

Table of contents

Here's a summary on the best data curation tools for computer vision.

In the world of AI, it's no longer just about hoarding mountains of data. The real magic happens when raw information is curated – meticulously organized and refined into a reliable, high-quality resource. This journey is absolutely vital for building top-tier computer vision models, as well-curated data can dramatically boost performance, slash errors, and save precious time.

- Why is smart data curation such a big deal for AI?

Forget simply throwing data at your models! Moving towards a data-centric strategy is now recognized as the secret sauce for AI success. Without thoughtful curation, models risk underperforming or learning biases. High-quality, relevant, and consistent data is the fuel that powers accurate, reliable, and efficient computer vision systems, making curation the unsung hero of the machine learning pipeline.

- What should you look for in a data curation tool?

Choosing the right tool can make or break your project. Keep an eye out for features that truly empower your workflow:

- Intelligent Data Prioritization: Can it smartly select the most valuable samples from huge datasets?

- Insightful Visualizations: Does it offer customizable views (plots, tables, embeddings) to help uncover biases, outliers, and edge cases?

- Model-Assisted Debugging: Can it help pinpoint data issues by showing you where your model struggles?

- Broad Modality & Annotation Support: Does it handle diverse data types (images, videos, medical scans) and all your annotation needs?

- User-Friendly Design & Automation: Is the interface intuitive, and can it automate repetitive tasks for efficiency?

- Collaboration & Integration: Does it support seamless teamwork and fit into your existing ML pipeline?

- So, how does data curation actually work?

It's a multi-step journey to transform raw data into a pristine resource:

- Collection: Gathering data from various sources.

- Cleaning: Removing errors, duplicates, and inconsistencies.

- Annotation: Adding crucial labels for supervised learning.

- Transformation: Converting data into suitable formats for algorithms.

- Integration & Enrichment: Combining data from multiple sources and adding valuable context.

- Metadata Management: Organizing "data about data" for discoverability and understanding.

- Validation & Privacy: Ensuring data quality and protecting sensitive information.

- Lineage & Maintenance: Tracking data's journey and keeping it current.

- Monitoring: Continuously overseeing the process for ongoing quality.

- Which tools are leading the pack for computer vision curation?

The market offers excellent options, each with unique strengths. Top contenders include:

- Labelbox: Known for its AI-driven model-assisted labeling and robust quality assurance.

- Labellerr: Offers high-speed, high-quality labels with advanced automation.

- Lightly: A standout for its AI-powered data selection and prioritization, using self-supervised learning to identify valuable clusters and drastically reduce labeling costs, even for millions of images.

- SuperAnnotate: A comprehensive platform with advanced annotation tools and human-in-the-loop validation.

- Mindkosh AI: Automates data preparation, including cleaning, transformation, and AI-assisted annotation.

- Clarifai: An enterprise-grade platform for end-to-end data management across multiple modalities.

- Superb AI: Simplifies dataset creation with similarity search and model-assisted debugging.

- Cleanlab: Specializes in diagnosing and fixing data quality issues using confident learning algorithms.

- Encord Index: Excels at visualizing, searching, and managing datasets, especially for complex use cases like medical imaging.

- DatologyAI: Focuses on optimizing training efficiency and reducing compute costs through automated curation.

Ultimately, the best tool will seamlessly align with your project's specific needs, budget, and team expertise, ensuring your data is always fit for purpose.

In the era of big data and artificial intelligence, effective data curation has become essential. Rather than focusing on just collecting huge amounts of data, the focus is now on organizing and transforming raw information into a refined, reliable resource that drives informed decision-making.

Data curation is especially critical in machine learning, ensuring data used for training models is accurate, relevant, and consistent. Moving from a model-focused approach to a data-centric strategy is increasingly recognized as the key to success in AI projects. Without proper data curation, models risk underperforming or producing biased results. On the other hand, well-curated data can significantly enhance model performance, reduce errors, and save valuable time.

In this article, we’ll explore the best data curation tools to bring out the best out of your data in 2025. We’ll also highlight the key features to look for when choosing a tool and outline the main steps involved in the data curation process.

What to Look for in a Data Curation Tool

When choosing a data curation tool, several key factors should be considered to ensure it meets the requirements of a project. Here are the elements to look for in a data curation tool.

- Data Prioritization: The tool should filter, sort, and select data, handling large datasets and automating prioritization tasks. This includes choosing the most relevant and valuable data samples.

- Visualizations: Look for customizable visualization options to better understand and analyze data. These should support formats like tables, plots, and images. Visualizing data helps identify biases, outliers, and edge cases.

- Model-Assisted Insights: The tool should support debugging by visualizing model performance. Features like confusion matrices can help identify problems. Model-assisted debugging helps identify errors in the data, such as false positives and negatives.

- Modality Support: Ensure the tool can handle various data types, such as images, videos, and medical imaging formats. Additionally, it should support different annotation formats, including bounding boxes, segmentation, polylines, and key points.

- Simple and Configurable UI: The tool should have a user-friendly interface that serves both technical and non-technical users and supports automated workflows and integration.

- Annotation Integration: Look for seamless annotation workflows that allow efficient creation, editing, and management of labels and annotations. Annotation and labeling are important for data curation in computer vision.

- Collaboration: Select a tool that supports collaboration among team members on data curation projects.

- Data Management: The tool should handle large image datasets efficiently, provide import and export functionalities, and have efficient organizational features.

- Automation: The tool should support automated processes that streamline workflows.

- Pipeline Management: The tool should integrate into machine learning pipelines and storage systems to provide efficient workflows.

What are the Main Steps of Data Curation?

The process of data curation includes steps to convert raw data into a valuable resource for analysis and machine learning. These steps ensure that the data is accurate, consistent, and appropriate for its intended use.

Here are the main steps of data curation:

1. Data Collection

This first step includes gathering data from different sources such as databases, websites, IoT devices, and social media. The data collected can be unstructured or structured. Identifying and collecting accurate and relevant data is important for decision-making and gaining business insights.

2. Data Cleaning

After collection, the data is cleaned to ensure its quality and accuracy. This includes handling missing values, removing duplicates, correcting inconsistencies, and removing outliers. Cleaning ensures that the data is reliable and ready for further processing.

3. Data Annotation

Data may need annotation depending on the machine learning task, which involves adding labels to the data. For example, in image recognition, images are labeled to identify objects, while in natural language processing, text is annotated to show parts of speech or sentiment. Annotation is crucial for supervised learning, where models rely on labeled examples which serve as the ground truth to learn.

💡Pro Tip: For data labeling tools, check out our comparison in the article titled 12 Best Data Annotation Tools for Computer Vision [Free & Paid].

4. Data Transformation

Cleaned and annotated data may need to be transformed into a format suitable for machine learning algorithms. This may include techniques such as one-hot encoding for categorical data, normalization or standardization for numerical data, or converting text into numerical sequences. Data transformation ensures that the data is compatible with the analytical tools and algorithms that will be used.

5. Data Integration

When data is collected from multiple sources, data integration is important to combine it into a unified view:

- Record Linkage: Identifying and matching records referring to the same entity across datasets to create a unified customer view from different systems.

- Schema Mapping: Resolving differences in data structures (schemas) between different datasets. This includes mapping columns or fields from one dataset to corresponding columns in another dataset.

6. Data Enrichment

Data enrichment includes adding more relevant information to existing data to improve its value and provide more context. This can be done by adding geographical tags to user call records or metadata tags to financial transactions. Data enrichment improves the accuracy of predictive models used in various applications.

7. Metadata Management

Metadata management is crucial for data curation. It focuses on the systematic organization of metadata that provides context about data. Metadata is often described as "data about data" and includes information that makes data more discoverable, understandable, and usable. Information Included in metadata typically includes:

- Location: Where the data is stored.

- Format: The data type (e.g., CSV, JSON, image, video).

- Purpose: What is the data used for?

- How it was collected: The method of data acquisition.

- Update frequency: How often is the data refreshed?

- Data definitions: Explanations of what each data element represents.

- Data lineage: Information about the origin of the data, its transformations, and dependencies.

- Data quality: Information about the data's accuracy, completeness, and consistency.

8. Data Validation

Implementing data validation systems to monitor the data's accuracy, completeness, and consistency is important. This ensures that the data meets the required quality standards, which is critical for generating reliable insights.

9. Data Privacy Enforcement

Protecting data privacy is important when dealing with sensitive information. It must be prioritized by providing secure access only to authorized users. This includes creating strong data governance policies, using encryption, and enforcing access controls.

10. Data Lineage Establishment

Data lineage describes the origin, structure, and dependencies of data. This includes tracking the data flow throughout its lifecycle, which helps identify and fix errors during data transfer and maintains data quality and traceability.

11. Data Maintenance

Over time, data may need updates or additional information. Maintaining the dataset ensures it remains relevant and valuable for ongoing machine learning tasks. This step is important for long-term data accuracy and usability.

12. Regular Monitoring

The data curation process must be continuously monitored to identify and resolve issues. This includes configuring metrics to measure data accuracy and using audits to implement improvements.

Top Data Curation Tools

Before we go into a detailed discussion of the data curation tools, here's a quick overview:

The following are some of the leading data curation tools:

1. Labelbox

Labelbox is a leading data curation platform that enhances the training data iteration loop. This loop includes the processes of labeling data, evaluating model performance, and determining the most important images. This iterative approach helps teams improve their machine-learning models by efficiently managing and refining their datasets.

Key Features

- Provide AI-driven model-assisted labeling features that suggest annotations based on previously labeled data.

- Helps in deciding which images are most important based on model performance.

- Built-in quality assurance mechanisms that enable users to review annotations for accuracy and consistency.

- Integration with machine learning frameworks.

- Provide visualization tools to help users better understand their data, simplifying trends and issue identification.

- Provides a comprehensive suite of annotation tools for various data types, including images, videos, and text.

2. Labellerr

Labellerr provides high-quality labels at a fast speed and supports different data types. It offers advanced automation capabilities such as prompt-based labeling and model-assisted labeling. Labellerr integrates with MLOps environments such as GCP Vertex AI and AWS Sagemake.

Key Features

- Automation streamlines the labeling workflow, reducing manual effort and human error.

- Automated QA processes improve data integrity and minimize manual verification.

- Facilitates smooth workflow from data preparation to model deployment.

- Enable users to generate synthetic datasets, reducing the dependency on real-world data collection.

- Helps users with valuable insights into their data and optimizes project workflows.

- Variety of annotation types (bounding boxes, polygons, etc.).

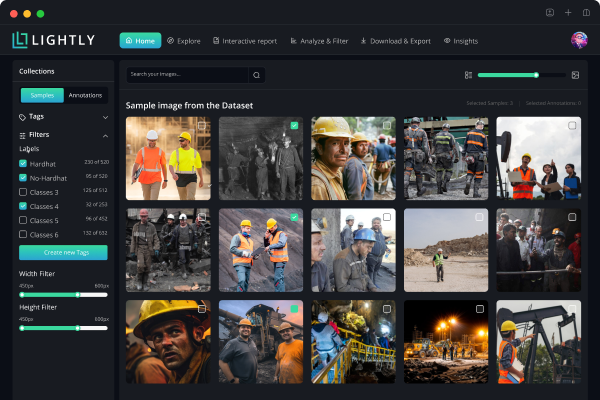

3. Lightly

Lightly is a powerful tool designed for managing large datasets. By leveraging self-supervised learning to find clusters of similar data and helps to select the most valuable data for labeling. This approach dramatically reduces labeling costs while ensuring high-quality data selection for training computer vision models. Lightly scales effortlessly, capable of processing millions of images, making it an ideal choice for optimizing machine learning workflows.

Key Features

- AI-powered data selection and prioritization.

- Intuitive data exploration and visualization tools for deeper insights.

- Open-source Python library, enabling seamless integration into existing workflows.

- Streamlines data organization for training computer vision models more effectively.

- Can be run locally or connected directly to cloud storage for automated processing of new data.

- Helps improve models by better understanding data distribution, identifying biases, and uncovering edge cases.

💡 Pro tip: Check out our list of Best Computer Vision Tools [2025 Reviewed]

4. SuperAnnotate

SuperAnnotate is a comprehensive data curation platform designed for computer vision and machine learning projects. It offers automated annotation features powered by AI to accelerate labeling tasks while maintaining high accuracy through human-in-the-loop validation. SuperAnnotate excels in complex image and video annotations, providing specialized tools for medical imaging and autonomous vehicles. The platform provides collaboration among teams, project scalability, and smooth integration with wide machine-learning frameworks.

Key Features

- Pre-labeling capabilities using automated tools to speed up annotation workflows and reduce manual effort.

- Automated quality checks, validation, and detailed performance analytics to maintain annotation accuracy.

- Advanced tools for pixel-perfect segmentation, video tracking, and 3D point cloud annotation.

- Comprehensive dashboards for tracking progress, team performance, and project metrics in real-time.

- Integration with popular ML frameworks and custom API support for workflow automation.

5. Mindkosh AI

Mindkosh AI is a data curation and annotation platform that uses AI to automate various aspects of the data preparation process. It provides data cleaning, transformation, and deduplication features as well as AI-assisted annotation.

Key Features

- AI-powered data cleaning, transformation, and deduplication.

- Data quality assessment and monitoring.

- Provides automatic OCR capabilities for extracting text from images.

- Support for various data types.

- Offers video annotation with automatic interpolation to predict object positions across frames.

- Integration with other data management tools.

6. Clarifai

Clarifai is an enterprise-grade platform designed to help organizations prepare, label, and manage training data for AI models. It offers an end-to-end solution for data labeling across multiple modalities, including images, videos, text, and audio. The platform allows teams to create custom workflows, maintain annotation standards, and scale data preparation efficiently.

Key Features

- Handles diverse data types with specialized annotation tools for each type.

- Built-in mechanisms for consensus scoring, review processes, and error detection to maintain data quality.

- Supports multiple users with different roles, permissions, and task assignment capabilities.

- Provides various annotation methods, including bounding boxes, polygons, semantic segmentation, and text classification.

- Offers API endpoints for seamless integration with existing ML pipelines and workflows.

7. Superb AI

Superb AI helps curate, label, and consume machine learning datasets. It supports similarity search, interactive embeddings, model-assisted data, and label debugging. It simplifies the creation of training datasets for image data types.

Key Features

- Visual data exploration and analysis tools.

- Automatically curate data with high-quality embeddings.

- Integration with machine learning frameworks.

- It supports common annotation types such as bounding boxes, segmentation, and polygons.

- Uses embeddings to group similar data points efficiently, helping users spot and curate low-value samples.

8. Cleanlab

Cleanlab handles data quality and data-centric AI pipelines, using ML models to diagnose data issues. Cleanlab provides an automated pipeline for data preprocessing, model fine-tuning, and hyperparameter tuning.

Key Features

- Uses confident learning algorithms to identify potential label errors and problematic samples in datasets without manual review.

- Provides a comprehensive analysis of dataset quality, including confusion matrices and class-level quality scores.

- Implements multi-fold cross-validation to ensure error detection and quality assessment.

- Automated workflow for fixing identified issues, including label correction and data point removal recommendations.

- Available as an open-source library.

9. Encord Index

Encord Index effectively manages data, allowing teams to visualize, search, sort, and control datasets. It supports natural language search, external image search, and similarity search. Encord Index directly integrates with datasets for labeling and automated error detection and is SOC 2 and GDPR compliant.

Key Features

- Has multiple metrics for understanding data.

- Can customize metrics to specific needs.

- Works well with medical images.

- Support for various data formats.

- Discover where models go wrong and pick the best data to label next.

10. DatologyAI

DatologyAI optimizes training efficiency, maximizes performance, and reduces computing costs through automated data curation. It supports different data modalities, such as text, images, video, and tabular data.

Key Features

- Use advanced algorithms to analyze data and identify the most relevant subsets for AI tasks.

- Suggests the most informative data points for human labeling to improve model performance using active learning workflows.

- Effectively curate data even without labels.

- Can be integrated with existing cloud or on-premise data infrastructure.

- Continuously monitors data and model performance, suggesting updates to curated datasets.

Data Curation vs. Data Cleaning vs. Data Management

Data curation, cleaning, and management are all essential aspects of data preparation for analysis and use. While these terms are used interchangeably, each has a distinct focus.

- Data Curation: This broad process includes organizing and maintaining data to make it usable and accessible for specific purposes. It includes data cleaning, transformation, creating metadata, and documentation.

- Data Cleaning: This subset of data curation focuses on improving data quality by removing errors and inconsistencies. It includes handling missing values, removing duplicates, correcting errors, and dealing with outliers.

- Data Management: This includes handling all data throughout its lifecycle while ensuring data integrity, security, and accessibility across the organization.

Data Curation Best Practices

To achieve effective data curation, consider the following best practices to maximize the outcome of data curation:

- Clear data governance policies: Data governance policies are important to ensure data is used responsibly and ethically. These policies improve consistency across the organization.

- Prioritize data quality over quantity: It is important to prioritize data quality over the amount of data. Inaccurate or incomplete data is not useful for analysis.

- Automate wherever possible: Automation can reduce the time and effort required for data curation and boost efficiency and scalability.

- Maintain data documentation: Create detailed metadata that provides context and understanding of the data.

- Ensure data security and privacy: Comply with security regulations and establish data governance policies to protect sensitive data.

💡Pro Tip: If your curation pipeline relies on manual or semi-automated labeling, our Lightly vs. Label Studio comparison will help you decide which tool integrates more effectively into modern ML workflows.

Challenges in Data Curation

Several challenges can arise during data curation. These include :

- Ensuring data quality: Maintaining accuracy, completeness, and consistency can be complex, especially with large datasets.

- Managing data diversity and avoiding bias: It is important to maintain data diversity and avoid bias to produce accurate and unbiased results.

- Handling the complexities of annotation and labeling: Annotating data can be time-intensive and technically challenging, particularly for large-scale datasets.

- Addressing data privacy and ethical concerns: When working with sensitive data, it is important to address data privacy and ethical concerns.

Conclusion

Data curation ensures accurate and reliable machine learning data through collection, cleaning, annotation, and maintenance. Effective data curation improves model performance and decision-making. Tools like Lightly.ai provide various features for data management, automation, and quality assurance. Choosing the right tools for the task can help overcome challenges in data curation and ensure the data is fit for use.

See Lightly in Action

If you're part of a busy machine learning team, you already know the importance of efficient tools. Lightly understands your workflow challenges and offers three specialized products designed exactly for your needs:

- LightlyOne: The comprehensive data curation platform, built to automatically select and manage high-value images, reducing labeling costs and increasing dataset quality.

- LightlyTrain: Empower your models with smarter training workflows using advanced embedding and clustering techniques to ensure robust model performance.

- LightlyEdge: Take advantage of powerful on-device inference and smart data filtering directly at the edge, optimizing your computer vision applications for speed and efficiency.

Want to see Lightly's tools in action? Check out this short video overview to learn how Lightly can elevate your ML pipeline.

Discuss this Post

If you have any questions about this blog post, start a discussion on Lightly's Discord.

See Lightly in Action

Curate and label data, fine-tune foundation models — all in one platform.

Book a Demo

Stay ahead in computer vision

Get exclusive insights, tips, and updates from the Lightly.ai team.

.png)

.png)

.png)