Data Preparation Tools for Computer Vision 2021

This article provides a data preparation tool landscape for computer vision. The intention is to give an overview of the available solutions which machine learning engineers can use to build better models.

Here are the key insights on the 2021 data preparation tool landscape for computer vision:

- Why it matters: Data prep = ~80% of ML project work; high-quality data is critical for model success.

- Value-chain overview: Tools span 4 stages — Data Collection, Data Curation, Data Labeling, Model Training & Monitoring.

- Data Collection: Hardware (NVIDIA, Dell, ARM), storage (Seagate, Dell), synthetic data (CVEDIA, RabbitAI), and cloud storage (Google, AWS, Azure, plus innovators like Databricks).

- Data Curation: Lightly for smart data selection, DVC/Pachyderm/Roboflow for versioning, SiaSearch/Scale Nucleus for scene extraction, Brighter AI for advanced anonymization.

- Data Labeling:

  - Software: Labelbox, Superb AI, Segments.ai, Diffgram

  - Services: Scale AI, Appen, iMerit, Cloudfactory, Humansintheloop (niche)

  - Quality assurance: Aquariumlearning, IncendaAI

- Model Training & Beyond: Active learning (Lightly, Alectio, Prodigy AI), monitoring & drift detection (Arize, Fiddler, Superwise.ai), compliance & auditing (Google framework, Yields.io, SAS).

- Conclusion: While labeling dominates the market, big gaps remain in curation, quality assurance, monitoring, and compliance — fertile ground for future innovation.

Each segment of the landscape is explained in detail below.

Hot, new AI startups are emerging daily. However, when it comes to actual enabling technologies for machine and deep learning, the resources are rather scarce. This is especially true regarding computer vision. That’s why I decided to build a data preparation tool and infrastructure landscape to provide insight into what products and technologies a company can use to make its machine learning pipeline more efficient.

Why a focus on data preparation? Quite simply because data preparation usually makes up about 80% of the work in any machine learning project (see Cognilytica 2020). Thus, it is crucial to have the right tools at hand. Furthermore, an AI model can only be as good as the data it is trained on. A landscape that focuses on data in machine learning was, therefore, long overdue. A Reddit post I recently came across illustrates this need (see screenshot below).

Organizing along the value-chain

It is important to have a landscape that resembles the value chain of a typical machine learning development pipeline. The reasons are that (1) it is easy to segment the tools according to their position in the value chain and (2) everyone is already familiar with the workflow. Most machine learning projects follow the same 4 steps (see illustration below).

Each of those steps again has different substeps, which I will be addressing in greater detail in the following dedicated sections beginning with data collection.

1. Data Collection

Data collection is the most critical step in the value chain. Of course, today, it is possible to use many of the free, academic, and public datasets for machine learning, and a lot can be done with transfer learning. However, many of those public datasets are for non-commercial use only. That’s why anyone who wants to fine-tune models for a specific application needs their own data. This section takes a more in-depth look at the tools required for this step.

Hardware

First and foremost, we require hardware to collect, store, and process data. Here we can differentiate between 3 groups: (1) capturing systems such as cameras, LIDAR sensors, or microphones, (2) storage systems, and (3) computing systems. The first group is so vast that it deserves its own landscape, so I will not cover capturing systems in this landscape and article; doing so would simply exceed the scope. Specialized hardware for storage systems is provided by, for example, Dell, Seagate, Pravega, and Klas. Specialized computing systems are provided by, for example, NVIDIA, Dell, and ARM.

Synthetic Data

Once we have collected our real-world data, we might notice that there is not enough of it or that certain cases are not covered sufficiently. This can be true for autonomous driving, for example, where accident scenes are rarely captured. Missing data can be generated with dedicated tools: CVEDIA, for example, offers fully synthetic data, while RabbitAI can be used for semi-synthetic data.

Cloud Storage

The most famous cloud storage providers are Google, Amazon, and Microsoft. Still, it’s important to be aware that there are other players that try to innovate through smart data lake solutions. Examples include Databricks and Qubole.

2. Data Curation

Data curation is an underrated step in the value chain. Because it is a pre-processing step, many teams jump directly to labeling and training, where the more exciting action happens. The main goal is usually to understand what kind of data has been collected and to curate it into a well-balanced, high-quality dataset. The value that good analytics, selection, and management can create is tremendous, and mistakes made here will cost you dearly later on in the form of low accuracy and high costs.

Exploration & Curation

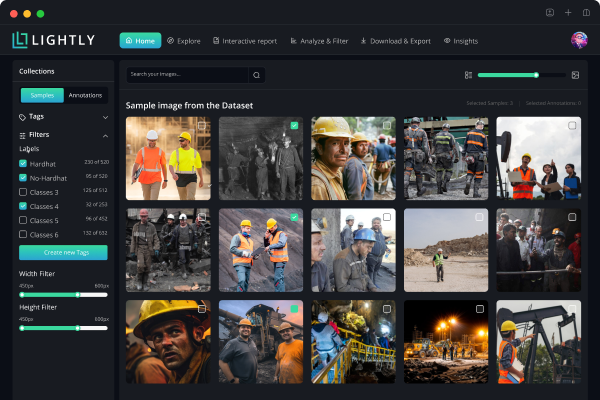

When it comes to exploration and curation of raw unlabelled data, the field is unfortunately still relatively empty. Data curation consists of tasks such as cleaning, filtering, and wrangling the data. Its importance lies in the fact that many companies only work with around 1% of their collected data, and this 1% (or any other percentage of used data) is what the models are later trained on. So, how can one determine which 1% to use? There are many traditional methods such as regression models (e.g., random forests) or primitive sampling methods such as random, GPS-based, or timestamp-based data selection. However, the only player in this field that currently offers an alternative is Lightly. Their AI-based solution offers analytics as well as smart selection methods.

Besides Lightly, which can work on unlabelled data, there are industry-specific applications for fields such as autonomous driving, which make use of sensor data for scenario extraction (see the segment on Scene Extraction).
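To make the difference between primitive sampling and a diversity-aware selection concrete, here is a minimal sketch. It assumes each image has already been turned into an embedding vector by some model, and it uses greedy farthest-point sampling as a stand-in technique; this is not a description of how Lightly or any other vendor selects data, and the function names and toy embeddings are hypothetical.

```python
import math
import random


def random_selection(n_items, k, seed=0):
    """Baseline: pick k indices uniformly at random."""
    rng = random.Random(seed)
    return sorted(rng.sample(range(n_items), k))


def farthest_point_selection(embeddings, k, start=0):
    """Greedy diversity selection: repeatedly add the sample that is
    farthest from everything selected so far."""
    if not 0 < k <= len(embeddings):
        raise ValueError("k must be between 1 and the number of samples")
    selected = [start]
    # Distance from every sample to its nearest already-selected sample.
    nearest = [math.dist(e, embeddings[start]) for e in embeddings]
    while len(selected) < k:
        idx = max(range(len(embeddings)), key=nearest.__getitem__)
        selected.append(idx)
        for i, e in enumerate(embeddings):
            nearest[i] = min(nearest[i], math.dist(e, embeddings[idx]))
    return selected


# Toy 2-D embeddings (hypothetical): four near-duplicates and two rare cases.
embeddings = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1),
              (5.0, 5.0), (-4.0, 6.0)]
picked = farthest_point_selection(embeddings, k=3)
print(picked)  # indices of one near-duplicate plus both rare cases
```

Random selection would mostly return near-duplicates from the dense cluster, while the greedy variant spends its budget on samples that differ from what is already selected.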

Versioning & Management

There are open-source solutions for data versioning such as DVC, and proprietary solutions such as Roboflow, Pachyderm, or Dataiku. It is important to emphasize that the supported data types vary, as does the providers' focus. Roboflow and Lightly offer comprehensive platform solutions that go beyond just versioning and management, while DVC, for example, has a very specialized focus on versioning only.

Scene Extraction

Scene extraction is an emerging field. The most established players are SiaSearch, Scale Nucleus, and Understand.ai. It is also a task that many data annotation companies offer through human labor.

Anonymization

There are many open-source solutions (e.g., OpenCV) available for anonymization, as well as commercial providers such as Understand.ai, which focus on faces and number plates. There are also efforts towards “smart” anonymization: instead of the typical blur, a synthetic overlay (e.g., a generated face or number plate) is added that doesn’t hurt a machine learning model's performance. As far as I am aware, the only company that is currently offering such a solution is Brighter AI.
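As a rough illustration of the "typical blur" approach, the sketch below pixelates a rectangular region of a grayscale image stored as plain Python lists. It is a simplified stand-in under stated assumptions: the bounding box is assumed to come from some face or number plate detector, the function name is hypothetical, and a real pipeline would more likely run a library routine such as OpenCV's `cv2.GaussianBlur` on the region.

```python
def pixelate_region(image, top, left, height, width, block=4):
    """Return a copy of a grayscale image (list of rows of ints) in which
    the given rectangle is replaced by block-wise averages."""
    out = [row[:] for row in image]
    bottom = min(top + height, len(image))
    right = min(left + width, len(image[0]))
    for by in range(top, bottom, block):
        for bx in range(left, right, block):
            ys = range(by, min(by + block, bottom))
            xs = range(bx, min(bx + block, right))
            mean = sum(image[y][x] for y in ys for x in xs) // (len(ys) * len(xs))
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


# Hypothetical 4x4 image; the 2x2 box at row 1, column 1 stands for a detected face.
image = [[0, 0, 0, 0],
         [0, 10, 20, 0],
         [0, 30, 40, 0],
         [0, 0, 0, 0]]
anonymized = pixelate_region(image, top=1, left=1, height=2, width=2, block=2)
```

Averaging destroys the detail inside the box, which is exactly what can hurt downstream training and what the synthetic-overlay approach tries to avoid.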

3. Data Labeling

This is a fiercely competitive market. Many players have appeared in recent years, and some of them, such as Scale AI, have even reached unicorn status. There are also some companies that have been around for a while, such as Appen. If the market is assessed more closely, three different segments can be identified: (1) labeling software providers, (2) labeling service providers, and (3) data quality assurance providers. Some players are active in several segments. When this was the case, I assigned the company to its main business focus.

Labeling Software

A few years ago, building labeling software was mainly about offering a UI that made it as efficient as possible to draw bounding boxes on images. This changed drastically with the growing need for annotations of semantically segmented images and the rise of other data types such as LIDAR, medical image formats, or text. Today, the players differentiate along four axes. First, through industry specialization (e.g., V7 focuses on medical data). Second, through automation innovation (e.g., Labelbox and Superb AI focus on automated annotation). Third, through application focus (e.g., Segments.ai focuses on image segmentation). Fourth, through their business model, where the software (labeling interface and backend) is open-core with paid web platform and enterprise versions available (e.g., Diffgram).

Labeling Service

The labeling service provider market is, without a doubt, the most crowded field with the biggest players. It is also the biggest market in terms of revenue, projected at $4.1B by 2024 (Source: Cognilytica). Some prominent, large players are Appen, Samasource, Scale, iMerit, Playment, and Cloudfactory. However, there are also smaller players that focus more on niches, such as Humansintheloop, Labelata, or DAITA.

Data Quality Assurance

Data quality assurance is an interesting new field that has appeared in the last year. The goal here is to find wrongly labeled data as well as other negative factors such as redundant or missing data in order to improve the machine learning model's performance. Some players offer this as a service, such as IncendaAI, and others as a tool, such as Aquariumlearning.

4. Model Training

We have now collected, curated, and labeled our data. But what happens with the data after we have trained a model? One could think the process is done, but that’s not true. Similar to software, machine learning models need to be updated, and the way to do that is, unsurprisingly, with data. So let’s have a look at the data tools that help us make this possible.

Model & Data Optimization

It’s crucial to validate and optimize the model as well as the training data prior to deployment. Areas that should be evaluated are model performance, model bias, and data quality. For this, it is crucial to understand where the model was uncertain or performed poorly. To update the model, one can use different techniques such as visual assessment, provided by companies such as Aquariumlearning, or active learning, which is provided by Alectio and Lightly. Active learning is a technique for querying the new samples you want to use in the training process. In other words, use the samples where the model performed poorly to find similar data, then update the model with that data to make it more robust. Some of the players in this field are Alectio, Lightly, Aquariumlearning, Prodigy AI, and Arize.
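One common way to decide which samples to query is uncertainty sampling: rank unlabeled samples by how unsure the model is and send the top ones to labeling. The sketch below uses prediction entropy as the uncertainty score. The sample ids and probabilities are hypothetical, and this is one generic active learning strategy, not the specific method of any vendor named above.

```python
import math


def entropy(probs):
    """Shannon entropy of a predicted class distribution (in nats)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_most_uncertain(predictions, budget):
    """Return the ids of the `budget` unlabeled samples with the highest entropy."""
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]),
                    reverse=True)
    return ranked[:budget]


# Hypothetical softmax outputs of a 3-class model on four unlabeled images.
predictions = {
    "img_001": [0.98, 0.01, 0.01],  # confident
    "img_002": [0.34, 0.33, 0.33],  # almost uniform, very uncertain
    "img_003": [0.70, 0.20, 0.10],
    "img_004": [0.50, 0.49, 0.01],
}
to_label = select_most_uncertain(predictions, budget=2)
print(to_label)
```

The selected images would then be labeled and added to the training set before the next training round.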

Model Monitoring

Once a model is deployed, the work continues. It’s possible that our AI system (e.g., autonomous driving) encounters scenes or situations it has never seen. This means that these scenes/samples were missing in the training dataset. This phenomenon is called data or model drift. It is a dangerous phenomenon and the reason why deployed models need to be continuously monitored. Fortunately, there are many players that offer such solutions: Arize, Fiddler, Hydrosphere, Superwise.ai, and Snorkel.
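A simple way to picture drift monitoring is to compare the distribution of some input statistic at training time with the same statistic in production. The sketch below computes the Population Stability Index (PSI) over a per-image feature such as mean brightness. The feature, the bin edges, the data, and the 0.25 alert threshold (a common rule of thumb) are all assumptions for illustration, not the approach of any tool listed above.

```python
import math


def histogram(values, edges):
    """Fraction of values per bin; values outside the edges fall into the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = sum(v >= e for e in edges[1:-1])  # number of inner edges passed
        counts[idx] += 1
    return [c / len(values) for c in counts]


def population_stability_index(reference, live, edges, eps=1e-6):
    """PSI = sum over bins of (live - ref) * ln(live / ref)."""
    ref = histogram(reference, edges)
    cur = histogram(live, edges)
    return sum((c - r) * math.log((c + eps) / (r + eps))
               for r, c in zip(ref, cur))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
# Hypothetical mean-brightness values: daytime training data vs. night-time production data.
train_brightness = [0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.3, 0.6]
night_brightness = [0.05, 0.1, 0.1, 0.15, 0.2, 0.2, 0.1, 0.3]

psi = population_stability_index(train_brightness, night_brightness, edges)
drift_detected = psi > 0.25  # assumed alert threshold
```

In practice, monitoring tools track many such statistics, often on model embeddings or predictions rather than a single hand-picked feature.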

Compliance & Auditing

Unfortunately, compliance & auditing is a topic that is often neglected by the industry. The results are models where the implicit bias during data collection propagates all the way to the application. It is important to emphasize that there are no excuses for building and deploying models which are non-ethical and non-sustainable. Companies have to be aware of their responsibility and need to be accountable for developing deep learning-based products. If they do not act appropriately, they should be punished.

There are several initiatives from the industry for self-regulation, including the framework proposed by Google: “Closing the AI accountability gap: defining an end-to-end framework for internal algorithmic auditing”. Nine researchers collaborated on the framework, including Google employees Andrew Smart, Margaret Mitchell, and Timnit Gebru, as well as former Partnership on AI fellow and current AI Now Institute fellow Deborah Raji. There are also private companies that offer independent third-party reviews, such as Fiddler, AI Ethica, Yields.io, and SAS.

Conclusion

In a nutshell, there are many amazing tools available that were not around just 3 years ago. Back then, companies had to develop all tools themselves, which made machine learning development incredibly expensive. Today, it’s possible to be much more efficient and sustainable thanks to the many providers. However, there are still large gaps to fill. The focus in recent years was heavily on data labeling software and data labeling services, fueled by large amounts of VC money. As a result, there is still room for development in data curation, quality assurance, and model validation, monitoring, and compliance. Fortunately, there are already players working in those fields to solve the new bottlenecks of AI.

I am looking forward to seeing many more appear this year.

Matthias Heller, co-founder Lightly.ai

PS: If your favorite tool is missing or you think a tool is misplaced, don’t hesitate to reach out to me.

Thanks to Mara Kaufmann, Philipp Wirth, Malte Ebner, Kerim Ben Halima, and Igor Susmelj for reading the drafts of this.

Contact Us

If this blog post caught your attention and you’re eager to learn more, follow us on Twitter and Medium! If you’d like to hear more about what we’re doing at Lightly, reach out to us at info@lightly.ai. If you’re interested in joining a fun, rockstar engineering crew to help make data preparation easy, reach out to us at jobs@lightly.ai!
