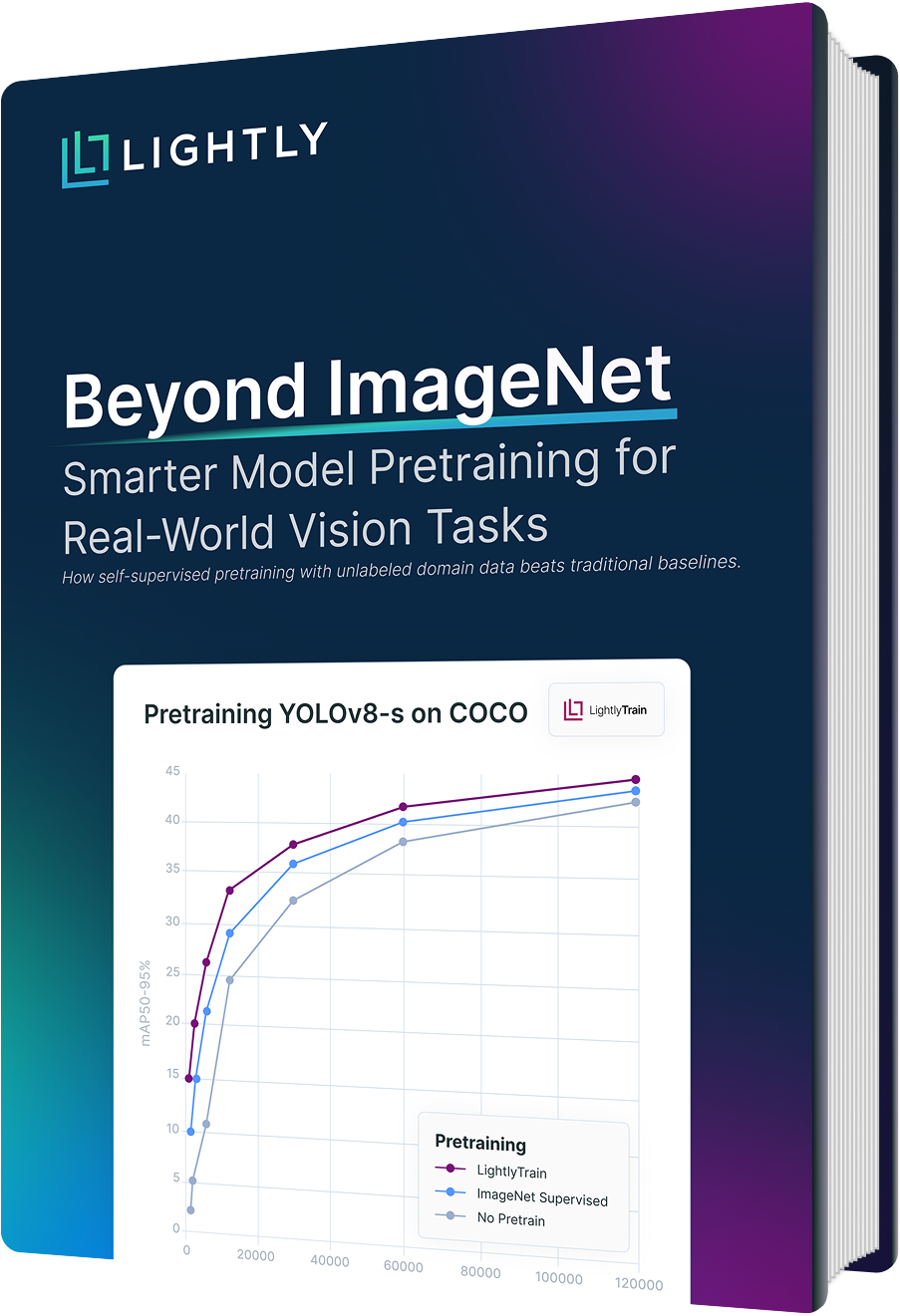

Beyond ImageNet: Smarter Model Pretraining for Real-World Vision Tasks

This guide presents benchmarks comparing domain-specific self-supervised pretraining (e.g., with DINOv2) against ImageNet pretraining on popular models such as YOLOv8, highlighting where traditional baselines fall short.

You Are Invited: Join Lightly's Private ML Engineering Discord

Want in? Collaborate with 300+ ML engineers optimizing data for their AI models.

Gain insights into:

Why ImageNet Isn’t Enough Anymore

Learn why generic ImageNet pretraining falls short for domain-specific tasks like medical imaging, agriculture, and autonomous driving. Understand the limitations of traditional transfer learning and why self-supervised learning (SSL) on unlabeled, in-domain data is a better fit.

Benchmark Results Across Domains and Models

Explore detailed performance comparisons between ImageNet pretraining, LightlyTrain pretraining, and training from scratch. See how LightlyTrain delivers consistent improvements across datasets (COCO, DeepWeeds, DeepLesion, BDD100K) and architectures (YOLO, RT-DETR, Faster R-CNN), especially when labeled data is scarce.

Implementing Domain-Specific SSL with LightlyTrain

Get step-by-step guidance on integrating LightlyTrain into your workflow. Learn how to pretrain models on your own unlabeled data with minimal setup, then fine-tune them for your specific application, boosting accuracy and efficiency while getting more value out of every label.
